Archive

Forget SPAM, why not backdoor software instead

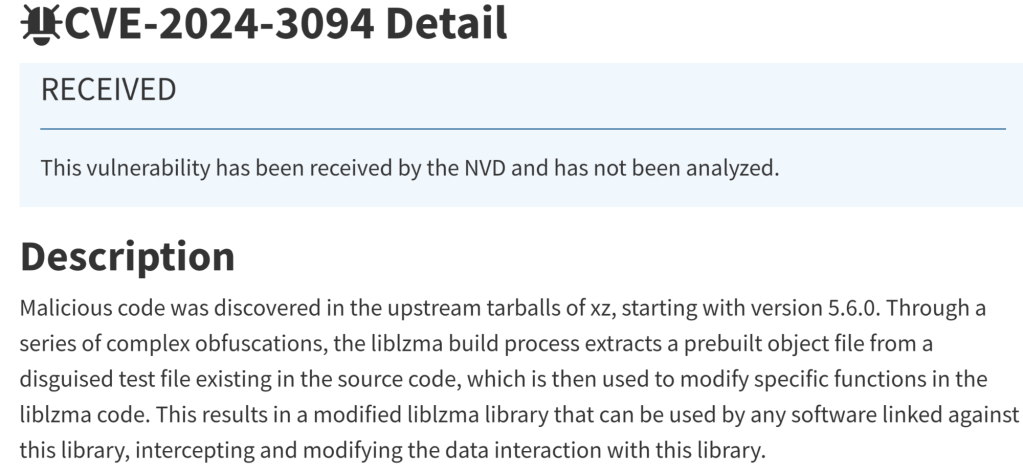

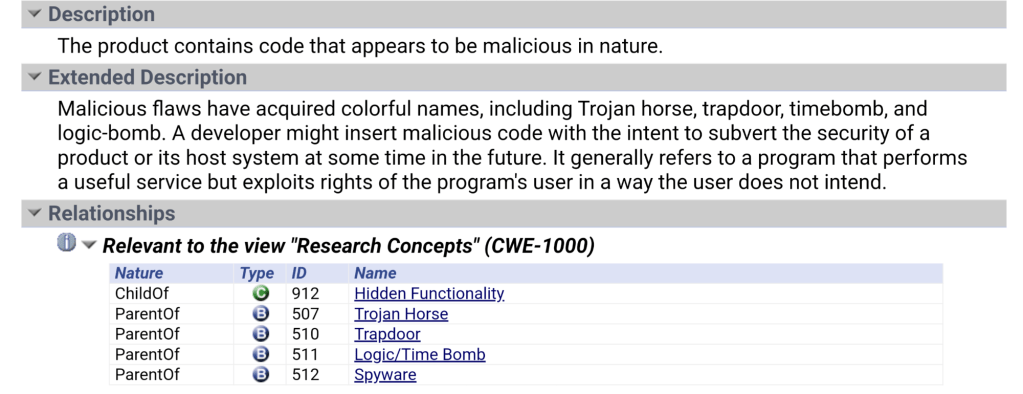

Open source software is used by so many people that it has become a target for a more sophisticated kind of attacker, one pernicious enough to have its own entry in the Common Weakness Enumeration (CWE) list: CWE-506, Embedded Malicious Code.

Let’s face it, over the last few decades, as the world was being eaten by software* we became too reliant on other people’s work. We ‘trusted’ what others were doing and relied too much on ‘good people doing great things’ so we could focus more on what we wanted.

The strength of open source is that many eyes review the code instead of just a handful of coders in a cave. That also makes us dependent on those same reviewers: if they don’t catch the malicious additions, who will?

Luckily, within a short time, a few posts on Thursday by a couple of curious sysadmins caught this ‘feature’ being added to the source, which quickly produced this CVE (above). Patch your stuff before you, too, become backdoored.

For my readers, I can only say that if you use software in your business (that should be *all* of you), then you probably use open source, so ‘Verify then Trust’, because this happens more often than you realize. Invest in your security program, which should include a threat analysis channel. We are at war with the hacker community, and they only need to win once!

Old tech for an old Techy

How many of you remember the days of the Pentium processors? What about the 386, when we used 30-pin SIMMs (1 MB shown here) and this 5-pin DIN adapter for the PS/2 keyboard?

I think that piece of memory actually cost me $25 in 1980s dollars! Computers have come a long way since the early days.

Cloud makes it easier to use but remember, it also makes it easier for the hackers 😜.

WSL Christmas with Fedora

Hey folks – Merry Xmas.

For those of you playing with the Windows Subsystem for Linux (WSL), I wanted to share a great recipe and the steps required for baking your very own Xmas Fedora Docker running on WSL. It makes a great holiday gift to share with your friends and family (because who doesn’t use containers these days?).

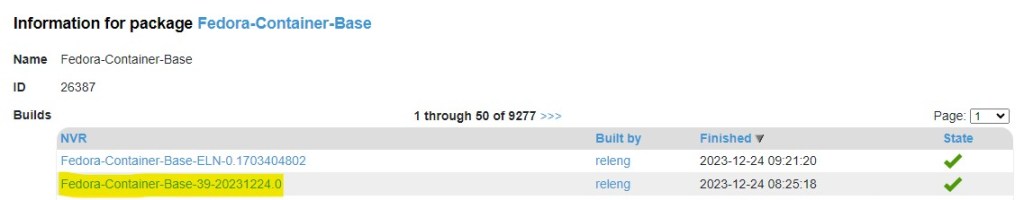

1. Download your new base image from Koji at https://koji.fedoraproject.org/koji/packageinfo?packageID=26387

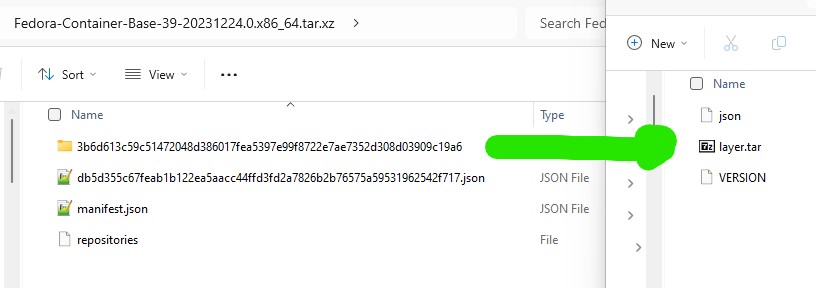

2. Carefully open the package and extract the file called layer.tar

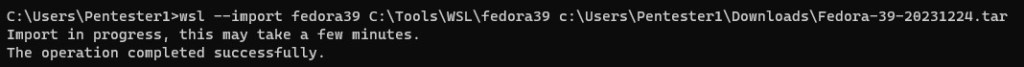

3. Mix well for a few seconds (here we have renamed the layer.tar file and used ‘fedora39’ as the image name, but you may adapt this ‘to your own taste’)

4. Bake in the oven for a few minutes (here we start the distro, perform an update and then install systemd)

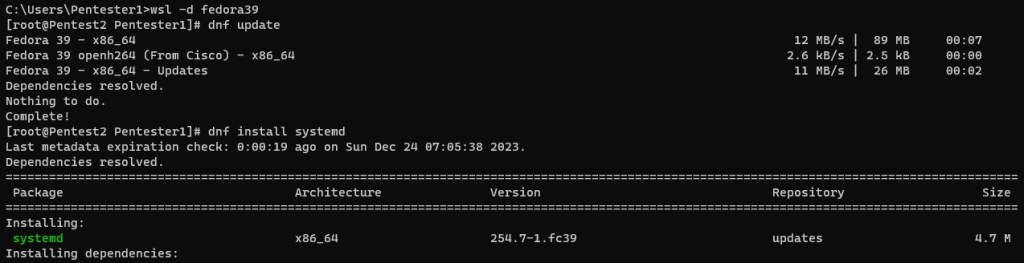

5. Decorate it with a custom file to activate systemd and let it cool down (restart)

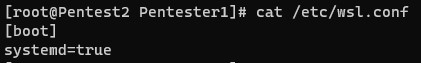

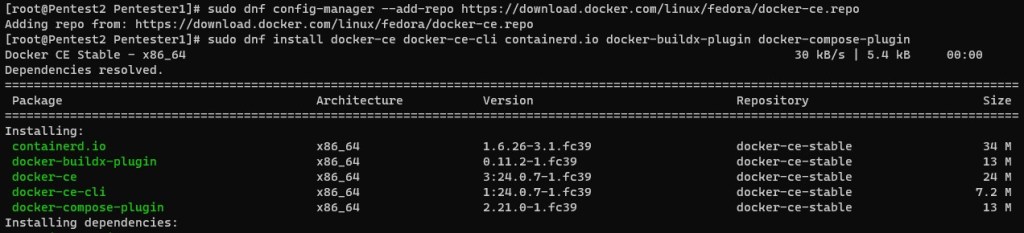

6. Package your cake with the following item (dnf-plugins-core)

7. Add the docker community repository for docker binaries and install the following components (docker-ce, docker-ce-cli, containerd.io, docker-buildx-plugin and docker-compose-plugin)
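Since the exact commands live in the screenshots, here is a rough text sketch of steps 4 to 7 as they might be typed inside the new distro as root. The function names are mine, the `wsl --import` install path in the comment is a placeholder, and the `dnf config-manager --add-repo` form assumes the dnf shipped with Fedora 39 (newer releases may use a different syntax).

```shell
# Assumption: the distro was first registered from Windows, for example with
#   wsl --import fedora39 C:\wsl\fedora39 fedora39.tar
# (the install path is a placeholder, not taken from the post).

# Step 4: update the distro and install systemd.
update_base() {
    dnf -y update
    dnf -y install systemd
}

# Step 5: activate systemd by adding a [boot] section to wsl.conf.
# The target file can be overridden, which is handy for a dry run.
enable_systemd() {
    conf="${1:-/etc/wsl.conf}"
    printf '[boot]\nsystemd=true\n' >> "$conf"
}

# Steps 6 and 7: add the Docker CE repository and install the components.
install_docker() {
    dnf -y install dnf-plugins-core
    dnf config-manager --add-repo https://download.docker.com/linux/fedora/docker-ce.repo
    dnf -y install docker-ce docker-ce-cli containerd.io \
        docker-buildx-plugin docker-compose-plugin
}
```

After `enable_systemd`, the distro has to be restarted from the Windows side (for example `wsl --terminate fedora39`) before systemd comes up.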

That is it – Serve to friends and family!

Happy Holidays to all my readers and thanks for a great year!

Build/Maintain your own golden container base images

Containers have become essential to optimizing software delivery for any business. They can support the principles of least privilege and least access by removing most of the attack surface associated with exposing services for public consumption. They are the smallest unit of the ‘4Cs’ (the term CNCF uses to describe Cloud, Cluster, Container and Code) and have become an important part of Kubernetes management. They strip away complexity, provide isolation and are portable, so it almost seems as though they have no downside, right?

Containers (and Kubernetes) are ephemeral and support the idea of a fully automated workload, but we don’t patch them like we used to. So how do we ensure that the inevitable vulnerabilities that arise (daily if not weekly) can be mitigated or even remediated? You start over, again and again, using the ‘latest and greatest’ base images. To understand this process, we need to see how it differs from traditional software deployment strategies.

First there was the base OS build, where we deployed an operating system and struggled to keep it updated. We applied patches to the OS to replace any software components that needed to be replaced. Many organizations struggled with patching cadence when the fleet of systems grew too large to manage. The speed of patching needed to increase as more and more vulnerabilities were found, which presented a challenge for larger organizations.

Containers start with a very small base image that provides some of the libraries needed by the code deployed with the image. Developers need to actively minimize the components to those required for core capabilities (like openssl for HTTPS, glibc for OS file and device operations, etc.). Failure to minimize the base image can result in adding more and more libraries rather than relying on the benefits of a shared kernel. Best practice requires understanding the OS being used so that the image size can be smaller and the attack surface reduced. This results in fewer vulnerabilities introduced at the container level, which can mean a longer runtime for that container image.

In support of this model, it is suggested that we consider how to maintain an approved (secure) base image for any container development so our deployment strategy can make use of secure (known NOT vulnerable) images to start from. The OS manufacturers are always releasing patched versions of their base image file-systems complete with the updated components. If we consider how to turn those updated base OS images into approved secure base images, the benefits provided can increase our productivity while reducing our attack surfaces.

The process proposed here can help us obtain and build base images that have a unique hash associated with them. Since container filesystems (aufs, overlay) can be fingerprinted, we can validate the base image hash through the entire release life-cycle. This adds a layer of detection against rogue container use and can serve as an early warning mechanism for both development and operations teams. Detecting who is using a known vulnerable base image allows notifications to be sent to application owners until those vulnerable images are removed from all of our systems.

Let me show you how this can be accomplished for any of the base images that should be approved for consumption. We start by using the ‘current build’ for any of the base OS images that we want to use. (Remember, whether your nodes run Red Hat, Debian, Ubuntu, Oracle, etc., to gain the best performance and make the best use of resources, your choice of base OS should match your node runtime version.) Let’s grab the latest version of the Jammy base OS for amd64. I will use podman to build my OCI compatible image, but we can also do this with docker.
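To make the hash check concrete, here is a minimal sketch of how a pipeline stage might compare an image archive against an approved value. It assumes a file-level SHA-256 of the saved archive stands in for the image hash, and that `sha256sum` is available; the function names are hypothetical.

```shell
# Print the SHA-256 digest of a file (first field of the sha256sum output).
file_digest() {
    sha256sum "$1" | awk '{ print $1 }'
}

# Succeed only when the archive matches the approved digest.
# Usage: verify_base_image ARCHIVE APPROVED_SHA256
verify_base_image() {
    [ "$(file_digest "$1")" = "$2" ]
}
```

A build step could then run something like `verify_base_image base.tar.gz "$approved" || echo "unapproved base image"`. If you would rather compare the image ID itself, podman can print it with `podman image inspect --format '{{.Id}}' IMAGE`.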

Step 1 (we should repeat this whenever there is a major change in this release. The vendor will update this daily)

root : podman import http://cdimage.ubuntu.com/ubuntu-base/jammy/daily/current/jammy-base-amd64.tar.gz jsi-jammy-08-22

Downloading from "http://cdimage.ubuntu.com/ubuntu-base/jammy/daily/current/jammy-base-amd64.tar.gz"

Getting image source signatures

Copying blob 911f3e234304 skipped: already exists

Copying config 4d667a55fb done

Writing manifest to image destination

Storing signatures

sha256:4d667a55fbdefddff7428b71ece752f7ccb7e881e4046ebf6e962d33ad4565cf

(Notice the hash of the base container image above. MY image was already downloaded)

Step 2 (we now save the image as an archive to be tested)

root : podman save -o jsi-jammy-08-22.tar --format oci-archive jsi-jammy-08-22

Copying blob bb2923fbc64c done

Copying config 4d667a55fb done

Writing manifest to image destination

Storing signatures

(we are using the name of the image and the date [mm-yy] to identify it. You may also use image tags, but it is best practice to use unique naming)

Step 3 (let’s save some space and compress it)

root : gzip -9 jsi-jammy-08-22.tar

(this results in the archive named jsi-jammy-08-22.tar.gz)

The final step is to run it through a security scan to ensure there are no high or critical vulnerabilities contained in this base image.
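The three steps can be wrapped into one small script so the image and archive names always agree. This is only a sketch: the function names are mine, the `jsi-` prefix follows the naming used here, and building the download URL from the release name is an assumption that I have only seen hold for jammy.

```shell
# Build the image name from a release and an mm-yy stamp,
# e.g. "jammy" and "08-22" give "jsi-jammy-08-22".
image_name() {
    printf 'jsi-%s-%s\n' "$1" "$2"
}

# Steps 1 to 3 in one function. It is not called automatically because it
# needs podman and network access.
# Usage: build_base_image jammy 08-22
build_base_image() {
    name=$(image_name "$1" "$2")
    url="http://cdimage.ubuntu.com/ubuntu-base/$1/daily/current/$1-base-amd64.tar.gz"
    podman import "$url" "$name" &&
        podman save -o "$name.tar" --format oci-archive "$name" &&
        gzip -9 "$name.tar"
}
```

Running `build_base_image jammy 08-22` should leave `jsi-jammy-08-22.tar.gz` in the current directory, ready for scanning.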

C:\image>snyk container test oci-archive:jsi-jammy-08-22.tar.gz

Testing oci-archive:jsi-jammy-08-22.tar.gz…

✗ Low severity vulnerability found in tar

Description: NULL Pointer Dereference

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-TAR-2791257

Introduced through: meta-common-packages@meta

From: meta-common-packages@meta > tar@1.34+dfsg-1build3

✗ Low severity vulnerability found in shadow/passwd

Description: Time-of-check Time-of-use (TOCTOU)

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-SHADOW-2801886

Introduced through: shadow/passwd@1:4.8.1-2ubuntu2, adduser@3.118ubuntu5, shadow/login@1:4.8.1-2ubuntu2

From: shadow/passwd@1:4.8.1-2ubuntu2

From: adduser@3.118ubuntu5 > shadow/passwd@1:4.8.1-2ubuntu2

From: shadow/login@1:4.8.1-2ubuntu2

✗ Low severity vulnerability found in pcre3/libpcre3

Description: Uncontrolled Recursion

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-PCRE3-2799820

Introduced through: pcre3/libpcre3@2:8.39-13ubuntu0.22.04.1, grep@3.7-1build1

From: pcre3/libpcre3@2:8.39-13ubuntu0.22.04.1

From: grep@3.7-1build1 > pcre3/libpcre3@2:8.39-13ubuntu0.22.04.1

✗ Low severity vulnerability found in pcre2/libpcre2-8-0

Description: Out-of-bounds Read

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-PCRE2-2810786

Introduced through: meta-common-packages@meta

From: meta-common-packages@meta > pcre2/libpcre2-8-0@10.39-3build1

✗ Low severity vulnerability found in pcre2/libpcre2-8-0

Description: Out-of-bounds Read

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-PCRE2-2810797

Introduced through: meta-common-packages@meta

From: meta-common-packages@meta > pcre2/libpcre2-8-0@10.39-3build1

✗ Low severity vulnerability found in ncurses/libtinfo6

Description: Out-of-bounds Read

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-NCURSES-2801048

Introduced through: ncurses/libtinfo6@6.3-2, bash@5.1-6ubuntu1, ncurses/libncurses6@6.3-2, ncurses/libncursesw6@6.3-2, ncurses/ncurses-bin@6.3-2, procps@2:3.3.17-6ubuntu2, util-linux@2.37.2-4ubuntu3, ncurses/ncurses-base@6.3-2

From: ncurses/libtinfo6@6.3-2

From: bash@5.1-6ubuntu1 > ncurses/libtinfo6@6.3-2

From: ncurses/libncurses6@6.3-2 > ncurses/libtinfo6@6.3-2

and 10 more...

✗ Low severity vulnerability found in krb5/libkrb5support0

Description: Integer Overflow or Wraparound

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-KRB5-2797765

Introduced through: krb5/libkrb5support0@1.19.2-2, adduser@3.118ubuntu5, krb5/libk5crypto3@1.19.2-2, krb5/libkrb5-3@1.19.2-2, krb5/libgssapi-krb5-2@1.19.2-2

From: krb5/libkrb5support0@1.19.2-2

From: adduser@3.118ubuntu5 > shadow/passwd@1:4.8.1-2ubuntu2 > pam/libpam-modules@1.4.0-11ubuntu2 > libnsl/libnsl2@1.3.0-2build2 > libtirpc/libtirpc3@1.3.2-2ubuntu0.1 > krb5/libgssapi-krb5-2@1.19.2-2 > krb5/libkrb5support0@1.19.2-2

From: adduser@3.118ubuntu5 > shadow/passwd@1:4.8.1-2ubuntu2 > pam/libpam-modules@1.4.0-11ubuntu2 > libnsl/libnsl2@1.3.0-2build2 > libtirpc/libtirpc3@1.3.2-2ubuntu0.1 > krb5/libgssapi-krb5-2@1.19.2-2 > krb5/libk5crypto3@1.19.2-2 > krb5/libkrb5support0@1.19.2-2

and 8 more...

✗ Low severity vulnerability found in gmp/libgmp10

Description: Integer Overflow or Wraparound

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-GMP-2775169

Introduced through: gmp/libgmp10@2:6.2.1+dfsg-3ubuntu1, coreutils@8.32-4.1ubuntu1, apt@2.4.6

From: gmp/libgmp10@2:6.2.1+dfsg-3ubuntu1

From: coreutils@8.32-4.1ubuntu1 > gmp/libgmp10@2:6.2.1+dfsg-3ubuntu1

From: apt@2.4.6 > gnutls28/libgnutls30@3.7.3-4ubuntu1 > gmp/libgmp10@2:6.2.1+dfsg-3ubuntu1

and 1 more...

✗ Low severity vulnerability found in glibc/libc-bin

Description: Allocation of Resources Without Limits or Throttling

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-GLIBC-2801292

Introduced through: glibc/libc-bin@2.35-0ubuntu3.1, meta-common-packages@meta

From: glibc/libc-bin@2.35-0ubuntu3.1

From: meta-common-packages@meta > glibc/libc6@2.35-0ubuntu3.1

✗ Low severity vulnerability found in coreutils

Description: Improper Input Validation

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-COREUTILS-2801226

Introduced through: coreutils@8.32-4.1ubuntu1

From: coreutils@8.32-4.1ubuntu1

✗ Medium severity vulnerability found in perl/perl-base

Description: Improper Verification of Cryptographic Signature

Info: https://snyk.io/vuln/SNYK-UBUNTU2204-PERL-278908

Introduced through: meta-common-packages@meta

From: meta-common-packages@meta > perl/perl-base@5.34.0-3ubuntu1

--------------------------------------------------------

Tested 102 dependencies for known issues, found 11 issues.

--------------------------------------------------------

(Look Ma, no high or critical findings!)
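If you want a pipeline to make that call instead of your eyes, a rough gate can be sketched against a saved copy of the report. This assumes the text output keeps the "<Severity> severity vulnerability found in" line shape shown above; the function names are hypothetical.

```shell
# Count findings at High or Critical severity in a saved text report.
count_blocking_findings() {
    grep -c -E '(High|Critical) severity vulnerability found in' "$1"
}

# Succeed (exit 0) only when the report has no High or Critical findings.
scan_passes() {
    [ "$(count_blocking_findings "$1")" -eq 0 ]
}
```

For example, redirect the scan output to `report.txt` and run `scan_passes report.txt || exit 1` in the build. The Snyk CLI also offers a `--severity-threshold` option, which may be the simpler route if you do not need the report itself.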

Now we have a base OS image ready to be used with any new/existing container build process. Best practices include the ability to digitally sign these images so that build pipelines can verify that any images being included are tested and approved. We can remove the previous version of the base OS image and provide a notice to current/future users that vulnerabilities have been found in the previous version. Dev teams can bump the version in any code they have and begin to test if there are any breaking changes that would require refactoring. Even if there is no change in the code, they must release their containers using these new base OS images to mitigate any vulnerabilities that are introduced.

After the breach…

Accidents happen, and in the security field they are usually called a ‘0-day’.

There are (at least) three questions you may be asked by your board, about your AppSec program…

- Was all the software tested using all of our controls & capabilities that were applicable?

- Did all the findings that were produced measure below our acceptable risk ratings?

- Were any/all of the vulnerabilities being fixed according to our accepted remediation timelines?

Let’s unpack that for everyone in an attempt to understand the motivations of some of our brightest ‘captains’. (If I were a board member…)

Misinformation – Does this event signal a lack of efficacy of our overall Appsec program? Do the controls work according to known practices? Perhaps, this is an anomaly, an edge case that now requires additional investment? What guarantees do we have that any correction strategy will be effective? If changes are warranted, which part should we focus on, People, Process or Technology?

Jeff says – changing the program can take a large investment for any/all of these. Get back to the basics and start with some metrics to see if you have effective coverage first. Prioritize making policy/configuration visible for each implementation of your security tools and aim for all of your results in one tool.

Liability – Is our security assessment program effective enough? Does this blind spot show us the inability to understand/avoid these threats at scale? Does this event indicate a systemic failure to detect/prevent this type of threat in the future?

Jeff says – Push results from Pentesting/Red Team/Security Ops back into the threat model and show if/how any improvement can be effective. Moving at the speed of DevOps means running more tests, more often, and correlating the findings to show value through velocity by catching and fixing them quickly.

Profit and Loss – Do we have a software quality problem that may require us to consider an alternative resource pool? If digitization is increasing in cost due to loss, maybe we need to improve our control capabilities to detect/prevent bad software from reaching production? Maybe we should take additional steps to ensure we have the right development teams to avoid mistakes?

Jeff says – to stop the bleeding, you might consider a different source of secure code. You might also consider an adjustment to your secure training programs. Maybe your security analysts are having their own quality issues. Consider raising the threshold for approved tools. Broker communication so your dev teams take on more of the security responsibility.

For any leadership who is dealing with CyberSecurity these days, these are all very good questions. Security is Hard, Application Security, Cloud Security, Data Security – they are ALL hard individually so how does any one person/team understand them entirely?

I began to ask myself that question almost a decade ago, during my mobile penetration testing period, when Facebook had created React, which involved more than one programming language in the same project. I found a cross-site scripting flaw in the mobile client during testing, which I felt pretty confident was NOT a false positive. I decided to check the static code findings to see if this could be correlated. (We can save the rest of that story for another blog post.)

A light went on in my head: ‘correlation between two or more security tools in a single pane of glass’. What an idea! You need something that can pull in all of the datasets (finding reports) and provide some deduplication, so we don’t give dev teams multiple findings from multiple tools, just the findings whose viability we are confident in. I investigated some of the tool vendors and worked with them for a few years while the capability began to mature in the industry.

Today, Gartner calls this space Application Security Orchestration and Correlation (ASOC), a combination of ‘security orchestration’ (where you apply policy as code) and correlating and deduplicating the results. When done successfully, it also provides a single pane of glass for the operations team or any other orchestration or reporting software in use in your org. Think of it as the one endpoint with all the answers; a way to abstract away the API schema and various life-cycle changes that are associated with new and existing tool-sets.
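As a toy illustration of the idea (not how any vendor does it), findings from several tools can be collapsed to one row per weakness and location, with a count of how many tools agreed. The three-column input format `tool,cwe,location` and the function name are inventions for this sketch.

```shell
# Collapse rows of "tool,cwe,location" into one row per (cwe, location),
# appending the number of tools that reported it. Reads files or stdin.
correlate_findings() {
    awk -F, '
        { key = $2 "," $3 }
        !(key in count) { order[++n] = key }   # remember first-seen order
        { count[key]++ }
        END { for (i = 1; i <= n; i++) print order[i] "," count[order[i]] }
    ' "$@"
}
```

A row ending in 2 or more is a finding that two or more tools agree on, which is the kind of confidence signal I was after with that XSS flaw.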

Whether you wish to interconnect all of your existing orchestration tooling for your pipelines & other infrastructure or perhaps you want to build out your security governance capabilities by conducting all of your own security testing, ASOC tools are capable of providing security at the speed of DevOps.

There really is no other way to accomplish it at scale!

Infosec not your job but your responsibility? How to be smarter than the average bear

Want to measure how beneficial it is for your software development teams to learn to think more like an adversary? Just look at the first 20 years of use against the last 10-20?

https://www.theregister.com/2022/07/25/infosec_not_your_job/

Web servers are still vulnerable…

In a survey published by Stack Overflow, an often referenced support site for developers, JavaScript was recently confirmed as the most popular programming language for the 6th year in a row. Almost 70% of the respondents claim that they visit searching for help on this subject, so it may not come as a surprise that JavaScript is also the primary cause of vulnerabilities on websites today.

In a blog post from PortSwigger, the vendor behind one of the most popular tools for hacking websites and finding vulnerabilities, they detail a number of methods that can be used to abuse JavaScript and bypass the cross-site scripting mitigations in most frameworks.

There are thousands of ways to bypass XSS protections in websites, and web developers should already know this. XSS is the number one method to compromise a browser which, in combination with privilege escalation, can allow an attacker to take over your computer. Even script kiddies can capture session tokens or cookies from websites without proper security controls, and those can be used to log in as you without even knowing your password. Here is a list of the risks in order of importance for an attacker:

- Account hijacking

- Credential stealing

- Sensitive Data Leakage

- Drive by Downloading

- Keyloggers/Scanners

- Vandalism

Don’t ignore these risks on your websites, public facing or not. If you log in often to a website in your organization and it is vulnerable to cross-site scripting, teach your users how to identify security risks that could be used to harvest credentials and expose them to malicious attacks. You may also want to make sure that your sites are tested to ensure they are not vulnerable to this type of attack. With phishing being the number one method pentesters use to gain access to your organization, XSS is the primary technique used to carry it out.

Playing with ASLR for Linux

I recently completed a certification with SANS where we were taught to create our own exploits. A well-behaved program should have a start procedure called a prologue, should clean up and maintain itself and any objects it creates, and should finish with an end procedure (the epilogue). During our testing we assumed that Address Space Layout Randomization (ASLR) was turned off in order for our exploits to be successful, but this is not always the case.

All programs have two different sections (instructions and data), and they need to be flagged differently in memory. A section where only normal data is stored should be marked as non-executable. Executable code that does not dynamically change should be flagged as read-only.

One of the tricks that hackers use to get control is to hijack the return address saved on the stack (a road map for where to go next). Evil programs are designed to perform a redirection in order to run their own malicious code. If we want to protect a program running in memory against evil programs, we can use ASLR. Address space layout randomization relies on the low chance of an attacker guessing the locations of randomly placed areas. Security is increased by increasing the search space.

Auditing

One of the easiest ways to find out if a Linux server has ASLR turned on is to look in the proc filesystem for the variable ‘randomize_va_space’.

cat /proc/sys/kernel/randomize_va_space

If the value returned is a zero (0), ASLR is off; a one (1) means it is on for the stack, the mmap base (where shared libraries load) and the VDSO page. Ideally you want the value two (2), which also randomizes the heap (brk). For a program’s own code to be randomized as well, it needs to be built as a Position Independent Executable (PIE), and the shared object libraries (.so libraries) it loads need to be built as position independent code.
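For auditing a fleet it helps to turn the number into words. Here is a small sketch; the wording of each description is my own summary of the kernel settings and the function name is hypothetical.

```shell
# Translate a randomize_va_space value into a short description.
describe_aslr() {
    case "$1" in
        0) echo "off" ;;
        1) echo "partial: stack, mmap base and vdso" ;;
        2) echo "full: also randomizes the heap (brk)" ;;
        *) echo "unknown value: $1" ;;
    esac
}

# Report the live setting when the proc entry is readable (Linux only).
if [ -r /proc/sys/kernel/randomize_va_space ]; then
    describe_aslr "$(cat /proc/sys/kernel/randomize_va_space)"
fi
```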

Another way to see if ASLR has been activated is to run ldd against your executable and inspect the load address of the shared object libraries.

$ ldd -d -r demo

linux-vdso.so.1 => (0x00007fffe2fff000)

libc.so.6 => /lib64/libc.so.6 (0x00007f3e73cd8000)

/lib64/ld-linux-x86-64.so.2 (0x00007f3e74057000)

$ ldd -d -r demo

linux-vdso.so.1 => (0x00007fff053ff000)

libc.so.6 => /lib64/libc.so.6 (0x00007f82071ac000)

/lib64/ld-linux-x86-64.so.2 (0x00007f820752b000)

Notice the change in the memory address referenced by the C library and the dynamic linker (libc and ld-linux-x86-64)? This indicates that ASLR is in effect.
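The same comparison can be scripted rather than eyeballed. This sketch takes the text of two ldd runs and checks whether a given library moved; the function names are hypothetical, and it assumes the load address is the last field on the line, as in the output above.

```shell
# Extract the load address of one library from ldd output passed as text.
load_address() {
    printf '%s\n' "$1" |
        awk -v lib="$2" '$1 == lib { gsub(/[()]/, "", $NF); print $NF }'
}

# Succeed when the library loaded at different addresses in the two runs.
# Usage: aslr_observed RUN1_TEXT RUN2_TEXT LIBRARY
aslr_observed() {
    [ "$(load_address "$1" "$3")" != "$(load_address "$2" "$3")" ]
}
```

For example: `aslr_observed "$(ldd ./demo)" "$(ldd ./demo)" libc.so.6 && echo "ASLR in effect"`.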

To ensure that all of your Linux code is more difficult to bypass, you will want to audit your systems and look for the value of 2 (assuming your other programs fully support PIE).

root@mybox:~$ sysctl -a --pattern "randomize"

kernel.randomize_va_space = 2

Bypass

I refer to ‘Bypassing ASLR’ on the Linux system as finding a way to defeat it as a security mechanism. If all shared libraries were compiled as PIE along with all executables (services if attacking remotely, and all executables if granted console access), then ASLR would be very difficult to beat, but that is not the case. The additional check required to be sure that the attack footprint has been reduced to zero is to audit each of the publicly available services to make sure that all libraries and executables involved are 100% position independent.

There is a great open source tool out there for checking if your executables support PIE.

‘CheckSec.sh’ maintained by slimm609 (https://github.com/slimm609/checksec.sh)

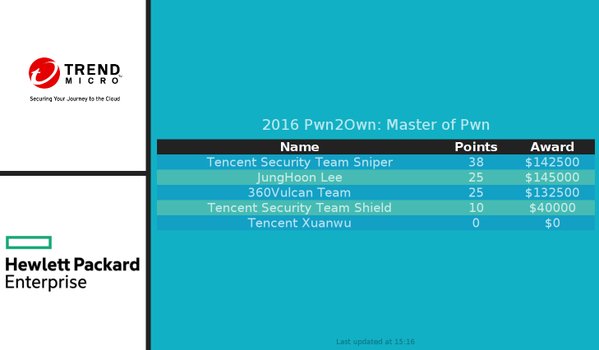
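If checksec is not at hand, a rougher check is possible by reading the ELF header. This sketch assumes binutils’ `readelf` is installed and classifies a file from the "Type:" line of `readelf -h`; the function name is hypothetical, and note that DYN covers shared libraries as well as PIE executables.

```shell
# Classify an ELF file from the "Type:" line of `readelf -h` output,
# passed in as text so the parsing can be checked without a binary.
pie_status() {
    elf_type=$(printf '%s\n' "$1" | awk '$1 == "Type:" { print $2 }')
    case "$elf_type" in
        DYN)  echo "position independent" ;;
        EXEC) echo "fixed address (not PIE)" ;;
        *)    echo "unknown" ;;
    esac
}
```

For example: `pie_status "$(readelf -h /usr/sbin/sshd)"`.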

Pwn2Own – it’s not just for browsers…

In a spectacular effort rewarded with over $200,000, a Chinese security team that goes by the name of ‘Tencent Keen’ managed to breach most of the mobile challenges in Trend Micro’s Masters of Pwn contest in Tokyo this week (Thursday Oct 27).

Often misspelled and mispronounced, the term ‘pwn’ derives from the verb ‘own’ and is generally regarded as the term that best describes domination of a rival. It comes from online video game culture and has been adopted by hackers as a way to describe taking control of a computer.

Both the Tencent Keen and MWR Labs teams (creators of the Drozer and Needle frameworks) were unable to successfully demonstrate the ability to remotely install a rogue application persistently, but don’t think all you smartphone users are safe just yet. Both teams showed some success earlier and were just not able to execute it during the 20 minute testing periods.

Trend Micro still paid out almost $400,000 to help understand how these bugs work, so let’s hope that these vulnerabilities will be mitigated in their newest mobile security software. Learn more about the event here

0-day in every Linux system introduced by Linus himself.

Last week it emerged that, in a self-proclaimed mistake made more than a decade ago, Linus Torvalds, the father of the Linux operating system, introduced a race condition that every version of Linux carries today. Referred to as a zero-day (0-day), this vulnerability is described as a bug in the kernel that allows write access to a read-only memory location. More info here

Introduced to fix another bug in a kernel function called get_user_pages(), this ‘fix by torvalds’ results in any server currently running an open service port being vulnerable to this attack. This represents a staggering number of servers, routers, cameras, IP phones, Android smartphones and digital video recorders. The list of uses for Linux today is endless, so swift patching is key. You may be surprised to learn that traffic control systems, high speed trains, nuclear submarines, robotic systems, fridges and stoves, PlayStations and even the Hadron collider run on Linux and would also be vulnerable.

The bug was witnessed by a keen observer who was inspecting his web server logs, so there are known exploits for this 0-day publicly available. The implications of this vulnerability are staggering, and the press has not given this much attention. This affects EVERY Linux-based system out there, going back over a DECADE.

The real shame is that there are already millions of embedded devices out there that will never receive patches and will remain vulnerable to this attack!

Want more info?
